Cybersecurity researchers have uncovered a large-scale exposure of artificial intelligence infrastructure after identifying more than 175,000 publicly accessible Ollama AI servers operating across 130 countries. The findings come from a joint investigation by SentinelOne SentinelLABS and Censys, which highlights the rapid growth of unmanaged AI compute environments on the public internet.

According to the researchers, the trend toward self-hosted, open-source AI deployments has produced a widespread layer of AI infrastructure that exists outside the security controls and monitoring frameworks normally enforced by platform providers.

Global Exposure Spans Cloud and Residential Networks

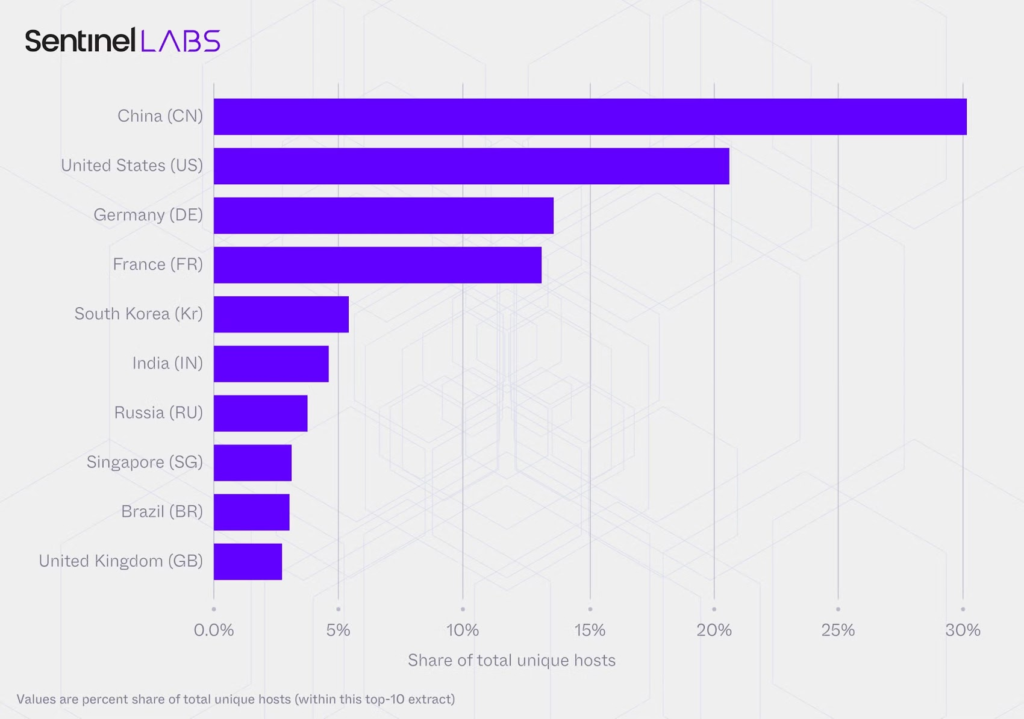

The exposed Ollama instances were found in both cloud-hosted environments and residential networks worldwide. China accounts for the largest share of exposed servers, representing just over 30 percent of the total footprint. Other countries with significant exposure include the United States, Germany, France, South Korea, India, Russia, Singapore, Brazil, and the United Kingdom.

The researchers noted that these systems operate without the default guardrails typically applied in managed AI platforms, increasing the risk of abuse and unauthorized access.

Tool Calling Capabilities Increase Risk Severity

A key concern highlighted in the investigation is the widespread use of tool calling functionality. Nearly half of the observed Ollama hosts advertise support for tool calling through their API endpoints. This capability allows large language models to execute code, interact with APIs, and communicate with external systems.

Researchers Gabriel Bernadett-Shapiro and Silas Cutler emphasized that this trend reflects the growing integration of LLMs into broader automated workflows and system processes.

Ollama itself is an open-source framework that lets users run and manage large language models locally on Windows, macOS, and Linux systems. It binds to the localhost address 127.0.0[.]1:11434 by default, but a simple configuration change can expose the service to the public internet by binding it to 0.0.0[.]0 or to a public network interface.
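The difference between a local-only and an exposed bind address can be checked mechanically. The following is a minimal sketch, assuming the bind address is supplied as a string in the style of Ollama's `OLLAMA_HOST` setting; the helper names `split_host` and `classify_bind` are hypothetical, and the addresses are written without the defanging brackets used in the text.

```python
import ipaddress


def split_host(value: str) -> str:
    """Return only the host part of a 'scheme://host:port' style value."""
    value = value.strip()
    if "://" in value:
        value = value.split("://", 1)[1]
    value = value.rstrip("/")
    if value.startswith("[") and "]" in value:
        # Bracketed IPv6 form, e.g. [::1]:11434
        return value[1:value.index("]")]
    if value.count(":") == 1:
        # Plain host:port form
        return value.split(":", 1)[0]
    # Bare hostname, bare IPv4, or bare IPv6 address
    return value


def classify_bind(value: str) -> str:
    """Classify a bind address by how widely it exposes the service."""
    host = split_host(value)
    if host.lower() == "localhost":
        return "loopback"
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not a literal IP address; judging it would require DNS resolution.
        return "hostname"
    if addr.is_loopback:
        return "loopback"
    if addr.is_unspecified:
        # 0.0.0.0 or :: means "listen on every interface".
        return "all-interfaces"
    return "specific-interface"


if __name__ == "__main__":
    for candidate in ("127.0.0.1:11434", "0.0.0.0:11434", "192.168.1.10:11434"):
        print(candidate, "->", classify_bind(candidate))
```

Only the "loopback" result corresponds to the default, local-only configuration. Whether an "all-interfaces" or "specific-interface" bind is actually reachable from the internet still depends on firewalls, NAT, and cloud security groups, which this sketch does not inspect.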

Unmanaged AI Compute Introduces New Security Challenges

The ability to host Ollama locally, similar to other emerging tools such as Moltbot, allows these AI services to operate outside enterprise security perimeters. This creates new challenges for defenders who must now distinguish between managed AI deployments and unmanaged compute running at the edge.

Of the exposed hosts analyzed, more than 48 percent openly advertised tool calling capabilities. When queried, these endpoints returned metadata detailing supported functions, including access to external systems and databases.
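To illustrate what "advertising" tool calling can look like in practice, the sketch below parses a model-metadata response and checks for a tool-calling capability. It assumes a JSON body with a `capabilities` list containing the string `tools`, modeled on the model-information responses of recent Ollama versions; the sample payload is invented for illustration, the function names are hypothetical, and this is not the researchers' methodology.

```python
import json

# Invented sample payload; the field names are an assumption, not captured data.
SAMPLE_SHOW_RESPONSE = """
{
  "details": {"family": "llama", "parameter_size": "8B"},
  "capabilities": ["completion", "tools"]
}
"""


def advertised_capabilities(raw_json: str) -> set[str]:
    """Extract the capability strings from a model-metadata JSON body."""
    data = json.loads(raw_json)
    caps = data.get("capabilities", []) if isinstance(data, dict) else []
    if not isinstance(caps, list):
        return set()
    return {c for c in caps if isinstance(c, str)}


def is_tool_enabled(raw_json: str) -> bool:
    """True when the metadata lists tool calling among its capabilities."""
    return "tools" in advertised_capabilities(raw_json)


if __name__ == "__main__":
    print(sorted(advertised_capabilities(SAMPLE_SHOW_RESPONSE)))
    print("tool calling advertised:", is_tool_enabled(SAMPLE_SHOW_RESPONSE))
```

A defender could run the same check against inventory data from their own hosts to decide which endpoints deserve the strictest access controls.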

Researchers warned that tool-enabled AI endpoints fundamentally change the threat landscape. While a basic text generation endpoint may produce harmful content, a tool-enabled endpoint can perform privileged actions when combined with weak authentication and open network exposure.

Advanced Capabilities and Reduced Safety Controls

The analysis also revealed that some exposed Ollama servers support advanced modalities beyond text generation, including reasoning and vision-based processing. In addition, 201 identified hosts were running uncensored prompt templates that removed built-in safety mechanisms.

This lack of safeguards increases the likelihood of misuse, particularly in environments where access controls are absent or misconfigured.

LLMjacking Threats Are Actively Exploited

Publicly exposed AI infrastructure is increasingly being targeted for LLMjacking attacks. In such scenarios, threat actors hijack exposed LLM resources to carry out malicious activities while shifting operational costs to the victim.

Abuse cases include spam generation, disinformation campaigns, cryptocurrency mining, and reselling unauthorized access to other criminal groups.

These risks are no longer theoretical. A recent report by Pillar Security detailed an active LLMjacking campaign known as Operation Bizarre Bazaar, in which attackers systematically scanned the internet for exposed Ollama instances, vLLM servers, and OpenAI-compatible APIs lacking authentication.

Criminal Marketplace Linked to Exposed AI Infrastructure

The operation reportedly involved validating exposed endpoints by testing response quality, then monetizing access by advertising discounted AI services through silver[.]inc, which operates as a Unified LLM API Gateway.

Researchers Eilon Cohen and Ariel Fogel stated that this represents the first documented end-to-end LLMjacking marketplace with clear attribution. The activity has been linked to a threat actor known as Hecker, also tracked under the aliases Sakuya and LiveGamer101.

Governance Gaps in Decentralized AI Deployments

The decentralized nature of the exposed Ollama ecosystem, spanning both residential and cloud environments, creates significant governance and visibility gaps. This architecture also opens new avenues for prompt injection attacks and the proxying of malicious traffic through compromised infrastructure.

Researchers stressed that the residential deployment model complicates traditional security oversight and requires new defensive approaches. They concluded that as LLMs are increasingly deployed at the edge to translate instructions into actions, they must be secured with the same authentication, monitoring, and network controls as any other internet-facing service.
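As one illustration of the authentication control mentioned above, the sketch below shows a bearer-token check of the kind a reverse proxy placed in front of a model endpoint might apply before forwarding a request. The proxy placement, the header handling, and the function name `is_authorized` are assumptions made for this example, not a configuration described by the researchers.

```python
import hmac


def is_authorized(headers: dict[str, str], expected_token: str) -> bool:
    """Check an 'Authorization: Bearer <token>' header against a shared secret."""
    if not expected_token:
        # Refuse everything if no secret is configured, rather than failing open.
        return False
    auth_value = ""
    for name, value in headers.items():
        if name.lower() == "authorization":
            auth_value = value
    scheme, _, token = auth_value.partition(" ")
    token = token.strip()
    if scheme.lower() != "bearer" or not token:
        return False
    # Constant-time comparison avoids leaking the secret through timing.
    return hmac.compare_digest(token.encode(), expected_token.encode())


if __name__ == "__main__":
    secret = "example-secret"  # placeholder value for illustration only
    print(is_authorized({"Authorization": "Bearer example-secret"}, secret))
    print(is_authorized({}, secret))
```

A check like this covers only authentication; request logging and network-level restrictions such as firewall rules or private interfaces would still be needed to address the monitoring and network controls the researchers call for.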
