Security analysts have uncovered more than 30 vulnerabilities across several artificial intelligence powered Integrated Development Environments that blend prompt injection weaknesses with trusted development features. These issues enable information theft and remote code execution. The combined flaws have been named IDEsaster by security researcher Ari Marzouk, also known as MaccariTA.

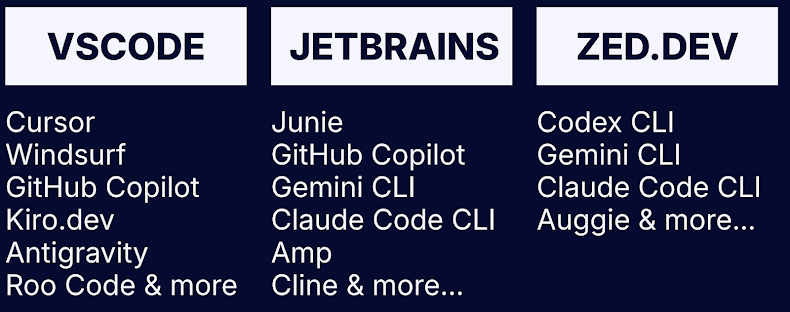

The findings affect a wide range of popular tools including Cursor, Windsurf, Kiro.dev, GitHub Copilot, Zed.dev, Roo Code, Junie, and Cline. Twenty four issues have been assigned CVE identifiers.

Marzouk told The Hacker News that the most surprising element was the presence of universal attack chains across all tested IDEs. According to the researcher, AI powered IDEs often assume that long standing IDE functionalities are safe, even though these same features can be exploited once an autonomous AI agent interacts with them.

How the IDEsaster Attack Chain Works

The vulnerabilities link three major vectors found in modern AI driven coding environments, which are:

- Bypassing large language model guardrails to hijack the surrounding context, also known as prompt injection

- Allowing an AI agent to perform actions without user interaction due to auto approved tool calls

- Triggering legitimate IDE features that can be turned into data exfiltration or code execution pathways

Unlike earlier prompt injection cases that relied on vulnerable tools, IDEsaster abuses built in and trusted IDE features. This includes operations such as reading sensitive files, writing configuration files, or modifying workspace settings.

Context Hijacking through Invisible and External Inputs

Attackers can hijack context in several ways. These include URLs or text containing hidden characters that are invisible to the user but readable by the model, poisoned Model Context Protocol servers, or any external input source that an AI agent trusts. Once context is compromised, the attacker can direct the AI agent to misuse IDE features for malicious purposes.

Key Vulnerabilities and Examples

Researchers highlighted several exploit chains, including:

• CVE-2025-49150 in Cursor, CVE-20255-3097 in Roo Code, CVE-2025-58335 in JetBrains Junie, GitHub Copilot with no CVE, and Kiro.dev with no CVE. Attackers use a prompt injection to read sensitive files with legitimate tools, then write a JSON file linked to a remote schema. The IDE later fetches this schema, leaking the data.

• CVE-2025-53773 in GitHub Copilot, CVE-2025-54130 in Cursor, CVE-2025-53536 in Roo Code, CVE-2025-55012 in Zed.dev, and similar issues in Claude Code. Here, a prompt injection edits IDE settings files and points executable paths to a malicious file, achieving remote code execution.

• CVE-2025-64660 in GitHub Copilot, CVE-2025-61590 in Cursor, and CVE-2025-58372 in Roo Code. In these cases, attackers modify workspace configuration files to run arbitrary code.

These attacks often succeed because many AI IDEs automatically approve file modifications for in workspace files.

Recommendations from Researchers

Marzouk advises developers to only use AI powered IDEs with trusted projects and files, monitor MCP servers closely, and review any added sources manually for hidden instructions. Users should only connect to trusted MCP servers and understand how each tool handles external data.

AI IDE developers are encouraged to enforce least privilege rules, reduce prompt injection vectors, improve system prompt protections, and test for path traversal, data leakage, and command injection risks.

Additional Vulnerabilities in AI Development Tools

Several other flaws were identified across the AI coding ecosystem, including:

• A command injection flaw in OpenAI Codex CLI, tracked as CVE-2025-61260. The tool executes commands configured by an MCP server at startup, which allows remote code execution if configuration files are tampered with.

• An indirect prompt injection in Google Antigravity that uses a poisoned web source to manipulate Gemini and exfiltrate sensitive code or credentials.

• Additional Google Antigravity vulnerabilities that allow persistent backdoors through malicious trusted workspaces.

• A new vulnerability class named PromptPwnd, which targets AI agents in CI CD pipelines such as GitHub Actions. Attackers use prompt injections to trigger privileged tools and leak sensitive data.

Expanding Attack Surface in Enterprise AI

Security researchers warn that AI integration in development pipelines introduces new risks because models cannot reliably distinguish between user instructions and malicious content from external sources. This may lead to prompt injection, command execution, repository compromise, and supply chain manipulation.

Marzouk highlighted the need for a new principle known as Secure for AI. This approach ensures that modern products are designed and hardened with awareness of possible AI abuse over time.

Found this article interesting? Follow us on Twitter , Facebook, Blue sky and LinkedIn to read more exclusive content we post.