Microsoft has introduced a lightweight security scanner designed to detect hidden backdoors in open-weight large language models (LLMs), aiming to strengthen trust and safety across artificial intelligence systems.

According to Microsoft’s AI Security team, the scanner relies on three observable behavioral signals that can reliably indicate whether a model has been compromised, while keeping false positives to a minimum. The approach focuses on how specific trigger inputs affect a model’s internal operations rather than relying on retraining or prior knowledge of the attack.

How Backdoors Enter AI Models

Large language models can be manipulated in multiple ways. One common method involves tampering with model weights, the parameters that guide how models process inputs and generate outputs. Another threat is model poisoning, where attackers embed malicious behavior in the model during training.

In poisoned models, the malicious behavior remains dormant under normal use. Once a specific trigger appears in a prompt, the model activates the hidden behavior. This makes detection difficult, as the model often appears harmless during standard testing.

Key Indicators of a Poisoned Model

Microsoft’s research highlights three practical indicators that suggest the presence of a backdoor:

- Distinct attention behavior

When exposed to a trigger phrase, poisoned models show a unique “double triangle” attention pattern. This causes the model to focus narrowly on the trigger and significantly reduce randomness in its output.

- Leakage of poisoning data

Backdoored models often memorize the poisoning data itself. Using memory extraction techniques, it becomes possible to recover trigger phrases or related malicious content from the model.

- Activation through fuzzy triggers

A single backdoor can be triggered by multiple variations of a phrase, even if those triggers are incomplete or only approximate versions of the original.
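The "reduced randomness" in the first indicator can be made concrete by comparing the entropy of a model's next-token distribution with and without a suspected trigger. The sketch below is an illustration only, not Microsoft's implementation: the logits are made-up values over a four-token vocabulary, and the names `shannon_entropy`, `softmax`, `clean_logits`, and `triggered_logits` are hypothetical.

```python
import math

def softmax(logits):
    """Convert raw logits into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def shannon_entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical next-token logits over a 4-token vocabulary (assumed values).
clean_logits = [1.0, 0.9, 1.1, 1.0]        # no trigger: probability is spread out
triggered_logits = [12.0, 0.5, 0.3, 0.1]   # trigger present: one token dominates

clean_entropy = shannon_entropy(softmax(clean_logits))
triggered_entropy = shannon_entropy(softmax(triggered_logits))

# A large drop suggests the output became close to deterministic.
entropy_drop = clean_entropy - triggered_entropy
print(f"clean={clean_entropy:.3f} bits, triggered={triggered_entropy:.3f} bits")
```

In a real setting the logits would come from the model under test, and a sharp entropy drop on its own would be a hint rather than proof, since many benign prompts also produce confident outputs.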

How the Scanner Works

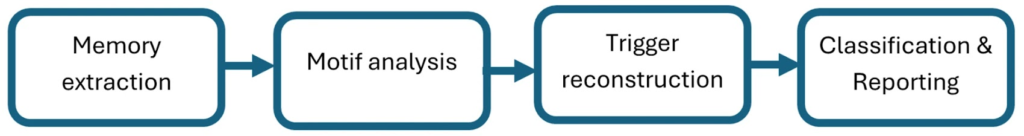

The scanner first extracts memorized content from the model and analyzes it to identify suspicious substrings. These substrings are then evaluated using loss functions based on the three identified signatures. The output is a ranked list of potential trigger candidates that may indicate embedded backdoors.
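The flow described above (extract content, find suspicious substrings, score them, rank them) can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the scanner's actual code: the extracted samples are invented, substrings are approximated as word n-grams that recur across samples, and `placeholder_score` is a hypothetical stand-in for the signature-based loss functions, which the article does not specify.

```python
from collections import Counter

def candidate_ngrams(samples, n_min=2, n_max=4):
    """Collect word n-grams that recur in at least two extracted samples."""
    counts = Counter()
    for text in samples:
        tokens = text.split()
        seen = set()
        for n in range(n_min, n_max + 1):
            for i in range(len(tokens) - n + 1):
                seen.add(" ".join(tokens[i:i + n]))
        counts.update(seen)  # count each n-gram at most once per sample
    return {gram: c for gram, c in counts.items() if c >= 2}

def placeholder_score(ngram, count):
    """Hypothetical stand-in for the scanner's loss functions:
    favors longer substrings that recur more often."""
    return count * len(ngram.split())

def rank_candidates(samples, score_fn=placeholder_score):
    """Return candidate substrings, highest score first (ties sorted by text)."""
    scored = [(score_fn(g, c), g) for g, c in candidate_ngrams(samples).items()]
    return [g for _, g in sorted(scored, key=lambda item: (-item[0], item[1]))]

# Invented examples of content extracted from a model (assumption).
samples = [
    "please summarize zx qv activate the report",
    "zx qv activate now",
    "translate this sentence zx qv activate",
    "the weather is nice today",
]

ranked = rank_candidates(samples)
print(ranked)
```

The scoring function is passed in as a parameter so that a more faithful score, for example one built from attention patterns or output entropy, could replace the placeholder without changing the ranking logic.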

A major advantage of this approach is that it requires no additional training and works across common GPT-style architectures, making it suitable for scanning models at scale.

Limitations and Future Outlook

Microsoft notes that the scanner has limitations. It cannot analyze proprietary or closed models because it requires access to model files. It also performs best on trigger-based backdoors that produce deterministic outputs and should not be considered a complete solution for every type of backdoor threat.

Despite these constraints, Microsoft views the scanner as a meaningful step toward practical AI security. The company emphasized the importance of collaboration within the AI security community to continue improving detection methods.

The announcement also aligns with Microsoft’s expansion of its Secure Development Lifecycle (SDL) to address AI-specific risks such as prompt injection, data poisoning, and unsafe model updates. As AI systems blur traditional trust boundaries, Microsoft says new security models are required to handle the growing number of entry points for malicious inputs.
