A recently released AI-driven penetration testing framework, called Villager, has been downloaded nearly 11,000 times from the Python Package Index (PyPI), sparking worries that threat actors could repurpose it for criminal activity. The package, linked to a China-based entity, is presented as a red teaming solution designed to automate and accelerate security testing workflows.

Origins and attribution, what we know so far

Security researchers attribute Villager to a group or company operating under the name Cyberspike. The package first appeared on PyPI in late July 2025, uploaded by a user named stupidfish001, a former capture-the-flag player associated with the Chinese HSCSEC team. Cyberspike first surfaced in public records when the domain cyberspike[.]top was registered in November 2023. The domain was registered under a company name that also appears on a Chinese talent platform, which leaves open questions about the organization’s true identity and motives.

Why researchers are worried, automation reduces the barrier to misuse

Experts warn that Villager’s public availability and automation features follow a pattern seen before, in which legitimate or commercial red teaming tools are widely adopted by malicious actors, as happened with Cobalt Strike. According to Straiker researchers Dan Regalado and Amanda Rousseau, the tool’s ease of access could put sophisticated tactics in the hands of less-skilled operators, widening the pool of attackers able to use them.

Attackers already use generative AI models to automate social engineering, exploit development, payload delivery, and infrastructure orchestration. Tools like Villager can shorten the time and expertise needed to stage attacks: scans can be parallelized across thousands of IPs, and failed exploit attempts can be retried adaptively, which raises success rates.

What Villager does, technical features and integrations

Villager is built as a Model Context Protocol (MCP) client and integrates with common offensive and automation toolsets such as Kali Linux utilities, LangChain, and DeepSeek AI models. It employs a library of over 4,200 AI system prompts to generate exploit workflows and make real-time decisions during penetration testing.

The framework can automatically spawn isolated Kali Linux containers for scanning, vulnerability assessment, and exploitation, then destroy those containers after 24 hours. Researchers note that the ephemeral lifecycle, randomized SSH ports, and automated cleanup make these containers harder to detect, complicating forensic investigations and attribution.

Villager uses a FastAPI-based interface for tasking and a Pydantic-backed AI agent platform for normalizing outputs, allowing the system to orchestrate complex offensive chains through natural language objectives rather than fixed scripts.
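To make "normalizing outputs" concrete, the sketch below shows the general idea of coercing loosely structured tool results into one strict, validated shape. It is an illustration only, not Villager's code: it uses standard-library dataclasses as a stand-in for Pydantic, and the `Finding` type, its field names, and the `normalize` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical normalized record; field names are illustrative only."""
    host: str
    port: int
    service: str

    def __post_init__(self):
        # Validate on construction, which is the role a schema library plays.
        if not 0 < self.port < 65536:
            raise ValueError(f"port out of range: {self.port}")


def normalize(raw: dict) -> Finding:
    """Coerce a loosely structured tool result into one strict shape."""
    return Finding(
        host=str(raw["host"]).strip().lower(),
        port=int(raw["port"]),  # accepts "22" as well as 22
        service=str(raw.get("service", "unknown")).strip().lower(),
    )
```

Once every tool's output passes through a schema like this, downstream logic can reason over one record type instead of many ad hoc formats, which is what lets a natural-language objective drive a multi-step chain.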

Troubling inclusions, RAT functionality and known hacktool integrations

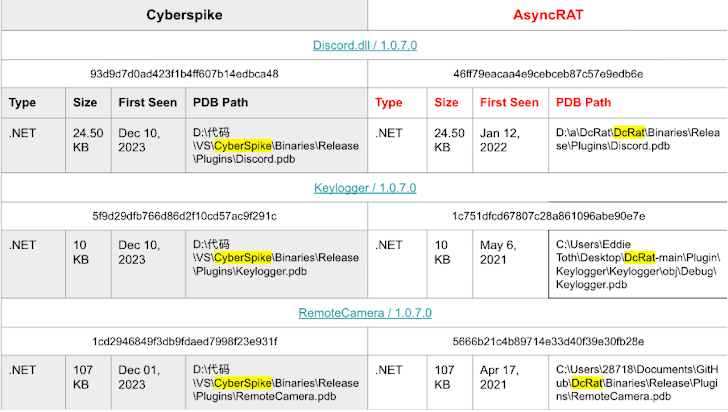

Analysis of Cyberspike’s offerings found plugins with remote access tool (RAT) capabilities, including remote desktop control, keystroke logging, webcam hijacking, and Discord account compromise. These components resemble features of known RAT families such as AsyncRAT, and the product also integrates classic offensive utilities like Mimikatz. Straiker researchers point out that Cyberspike effectively repackaged existing hacktools into a turnkey product that could serve both legitimate red teams and illicit operations.

Security implications, what defenders should watch for

Villager’s task-based AI orchestration lowers the skill threshold for advanced attacks, enabling broader exploitation at higher speed. Security teams should prepare for more automated reconnaissance, more frequent exploit attempts, and stealthier post-exploitation activity. Better detection of ephemeral containerized attacks, more complete network telemetry, and rapid correlation of indicators of compromise (IOCs) will be critical to reducing impact and improving attribution.
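As a starting point for the container detection described above, a defender could score container inventory records against the traits researchers reported: Kali-based images, SSH published on a non-standard port, and removal within 24 hours. The sketch below is a minimal heuristic under stated assumptions. The `ContainerRecord` shape is hypothetical and would need to be mapped onto whatever telemetry source is actually available, and the image-name check is a simple substring match.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass
class ContainerRecord:
    """Hypothetical inventory row; adapt to your own telemetry source."""
    name: str
    image: str
    created: datetime
    removed: Optional[datetime]      # None while the container still exists
    published_ports: Dict[int, int]  # host port -> container port


MAX_LIFETIME = timedelta(hours=24)   # container lifetime reported by researchers
SSH_PORT = 22


def suspicion_reasons(rec: ContainerRecord) -> List[str]:
    """Return the reported traits this container matches (may be empty)."""
    reasons = []
    if "kali" in rec.image.lower():
        reasons.append("kali-based image")
    for host_port, container_port in rec.published_ports.items():
        if container_port == SSH_PORT and host_port != SSH_PORT:
            reasons.append(f"ssh published on non-standard host port {host_port}")
    if rec.removed is not None and rec.removed - rec.created <= MAX_LIFETIME:
        reasons.append("removed within 24 hours of creation")
    return reasons
```

No single trait is meaningful on its own, since short-lived containers are routine in CI pipelines. A reasonable policy is to alert only when two or more reasons are returned for the same container.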

Broader context, GenAI and offensive tooling

The emergence of Villager follows other reports of nascent AI-assisted offensive tools, such as HexStrike AI, which threat actors have reportedly tried to use in their own attacks. Generative AI is increasingly used across attack chains, offering scalability and speed to adversaries. The community must balance legitimate uses of AI in security testing with robust safeguards, monitoring, and legal controls to prevent misuse.

Conclusion, balance and vigilance

Villager represents a significant step in AI-assisted offensive tooling: its automation can improve red teaming efficiency, but it also raises the risk of widespread abuse. Organizations, defenders, and platform maintainers should monitor PyPI packages, scrutinize dependencies, and enhance detection capabilities so that legitimate security testing does not become the foundation for large-scale malicious campaigns.
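One simple way to scrutinize dependencies is to check requirement files against a watchlist of package names. The sketch below assumes a pip-style requirements format. The watchlist entry is taken from the tool's name in this article and should be confirmed against the actual PyPI distribution name before use. The function names are illustrative.

```python
import re
from typing import List

# Assumed entry based on the tool's name; verify the exact PyPI project name.
WATCHLIST = {"villager"}


def normalize_name(name: str) -> str:
    """PEP 503 normalization: lowercase, runs of '-', '_', '.' become '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def flagged_requirements(requirements_text: str) -> List[str]:
    """Return requirement lines whose project name is on the watchlist."""
    hits = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):
            continue  # skip blanks and pip options such as -r or -e
        # The project name is everything before extras, specifiers, or markers.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match and normalize_name(match.group(0)) in WATCHLIST:
            hits.append(line)
    return hits
```

A requirements file lists only direct dependencies. Running the same check over `pip freeze` output, which uses the same `name==version` line format, also covers packages pulled in transitively.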