Cybersecurity experts have uncovered a serious vulnerability in OpenAI’s ChatGPT Atlas browser, which could let attackers inject malicious commands into the AI assistant’s memory and execute unauthorized code.

“This exploit enables cybercriminals to implant harmful code, elevate privileges, or deploy malware on targeted systems,” said Or Eshed, Co-Founder and CEO of LayerX Security, in a report shared with SctoCS.

Understanding the Vulnerability

The exploit takes advantage of a Cross-Site Request Forgery (CSRF) flaw that allows an attacker to insert malicious instructions into ChatGPT’s persistent memory. Because this memory is tied to the user’s account rather than to a single browser, the tainted entries persist across devices and browsing sessions. When users later rely on ChatGPT for legitimate tasks, those corrupted memories can trigger unwanted actions such as unauthorized account access or even control of the user’s system.

OpenAI introduced the memory feature in February 2024 to help ChatGPT remember useful details about users, such as their name, preferences, and past interactions, and personalize its responses accordingly. However, this same capability can now be weaponized.

The key risk is that these malicious memories remain active until users manually navigate to ChatGPT’s settings and delete them. This persistence makes the attack especially dangerous.

Why This Exploit Is Particularly Dangerous

Michelle Levy, Head of Security Research at LayerX Security, emphasized that “what makes this exploit exceptionally dangerous is that it targets the AI’s persistent memory, not just the browsing session.”

By chaining a CSRF vulnerability with a memory write action, attackers can silently plant malicious instructions that survive across sessions, devices, and browsers.

LayerX’s internal tests revealed that once the AI’s memory was corrupted, even regular user prompts could activate hidden operations like code execution, privilege escalation, or data exfiltration, all without raising alerts.

How the Attack Works

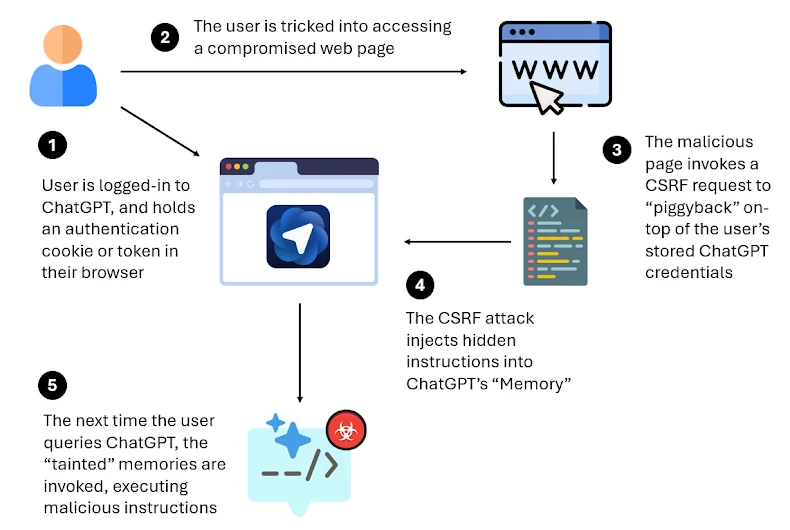

The attack scenario unfolds in several stages:

- The user logs into ChatGPT Atlas.

- Through social engineering, the victim is tricked into visiting a malicious webpage.

- The page triggers a CSRF request that leverages the user’s authenticated session to inject hidden instructions into ChatGPT’s memory.

- When the user later interacts with ChatGPT, these hidden commands are executed automatically.
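The core weakness in this chain is a state-changing request that the server accepts solely because the browser automatically attaches the user’s session cookie. LayerX has not published the affected endpoint, so the Python sketch below is a hypothetical model, not OpenAI’s code: `Server`, `Request`, `write_memory_vulnerable`, `write_memory_protected`, the `X-CSRF-Token` header name, and `chat.example.com` are all illustrative assumptions. It contrasts a memory-write handler that trusts the cookie alone with one that also checks the request’s Origin and a per-session anti-CSRF token, two standard CSRF defenses.

```python
import hmac
import secrets
from dataclasses import dataclass, field

# Hypothetical origin of the assistant's web app (illustrative only).
TRUSTED_ORIGIN = "https://chat.example.com"


@dataclass
class Request:
    """Simplified HTTP request model (hypothetical, not a real framework)."""
    origin: str
    cookies: dict = field(default_factory=dict)
    headers: dict = field(default_factory=dict)
    body: str = ""


@dataclass
class Server:
    sessions: dict = field(default_factory=dict)  # session id -> anti-CSRF token
    memories: dict = field(default_factory=dict)  # session id -> stored memory notes

    def login(self) -> tuple[str, str]:
        """Create a session and a per-session anti-CSRF token."""
        session_id = secrets.token_hex(16)
        csrf_token = secrets.token_hex(16)
        self.sessions[session_id] = csrf_token
        self.memories[session_id] = []
        return session_id, csrf_token

    def write_memory_vulnerable(self, req: Request) -> bool:
        # Only checks for a valid session cookie. A page on another site can make
        # the browser send this cookie, so a forged request is accepted.
        session_id = req.cookies.get("session")
        if session_id not in self.sessions:
            return False
        self.memories[session_id].append(req.body)
        return True

    def write_memory_protected(self, req: Request) -> bool:
        session_id = req.cookies.get("session")
        if session_id not in self.sessions:
            return False
        # Defense 1: reject requests that do not come from the app's own origin.
        if req.origin != TRUSTED_ORIGIN:
            return False
        # Defense 2: require a per-session token that a cross-site page cannot read.
        sent = req.headers.get("X-CSRF-Token", "")
        if not hmac.compare_digest(sent, self.sessions[session_id]):
            return False
        self.memories[session_id].append(req.body)
        return True


if __name__ == "__main__":
    server = Server()
    sid, token = server.login()
    # Request fired from a third-party page: cookie attached, foreign Origin, no token.
    forged = Request(origin="https://attacker.example",
                     cookies={"session": sid}, body="injected note")
    print(server.write_memory_vulnerable(forged))  # True: forged write accepted
    print(server.write_memory_protected(forged))   # False: forged write rejected
```

Checking both the Origin and a token is a defense-in-depth choice: either check alone stops this toy forged request. Real deployments typically also set the `SameSite` attribute on session cookies, which this model does not simulate.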

LayerX withheld some technical details to prevent misuse but confirmed that ChatGPT Atlas lacks strong anti-phishing defenses, leaving its users far more exposed than users of mainstream browsers.

Browser Security Comparison

In LayerX’s tests against more than 100 real-world phishing and web exploit attempts, the share of malicious pages each browser blocked varied widely:

- Microsoft Edge blocked 53%

- Google Chrome blocked 47%

- Dia Browser blocked 46%

- Perplexity Comet blocked only 7%

- ChatGPT Atlas blocked just 5.8% of malicious sites

By this measure, Atlas let roughly 94% of the malicious pages through, leaving it significantly more vulnerable to web-based attacks than Edge or Chrome.

Growing AI Threat Surface

The discovery follows another report by NeuralTrust, which demonstrated a prompt injection attack on ChatGPT Atlas. In that case, malicious prompts were disguised as URLs and entered into the omnibox (the combined address and search bar), allowing them to bypass the browser’s safety checks.

Researchers warn that AI browsers are becoming an integrated threat surface, combining apps, identity, and intelligence into one complex attack vector.

As Or Eshed noted, referring to the exploit LayerX has dubbed “Tainted Memories”: “Vulnerabilities like Tainted Memories act like a new form of supply chain risk. They follow the user, contaminate future sessions, and blur the boundary between helpful automation and covert control.”

He added that as AI-driven browsers merge productivity tools with AI capabilities, enterprises must start treating browsers as critical infrastructure, because the browser is becoming the next battleground for AI-driven cyber threats.