A large, persistent malicious operation has been abusing YouTube to distribute malware, publishing more than 3,000 deceptive videos since 2021. Check Point researchers call it the YouTube Ghost Network, and the volume of these videos has tripled this year. Google has removed a majority of the offending videos, but the campaign highlights how attackers weaponize popular platforms and social trust to spread stealer malware.

How the campaign works

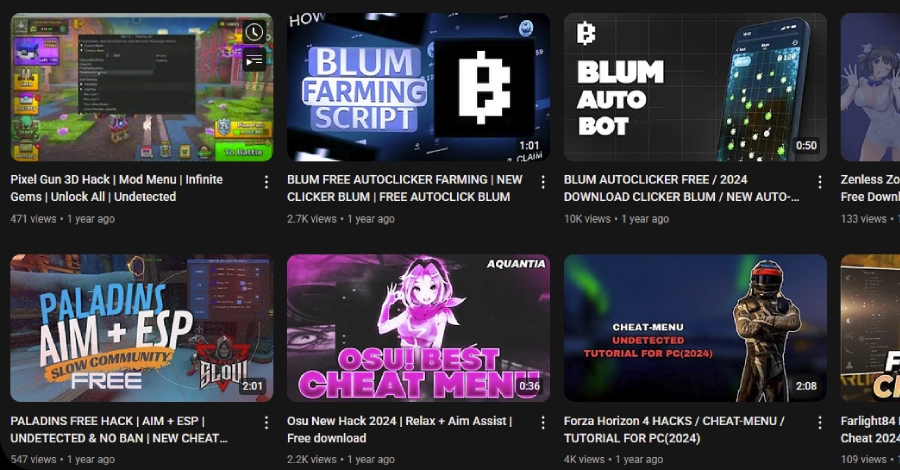

The operation primarily hijacks legitimate accounts, or uses newly created ones, to publish tutorial-style videos that appear to offer pirated software or game cheats, such as cheats for Roblox. The videos use descriptions, pinned comments, or on-screen instructions to point viewers to external links, which then deliver malware. Attackers exploit trust signals such as views, likes, and comments to make malicious content look legitimate and safe, enticing users to follow links or download files.

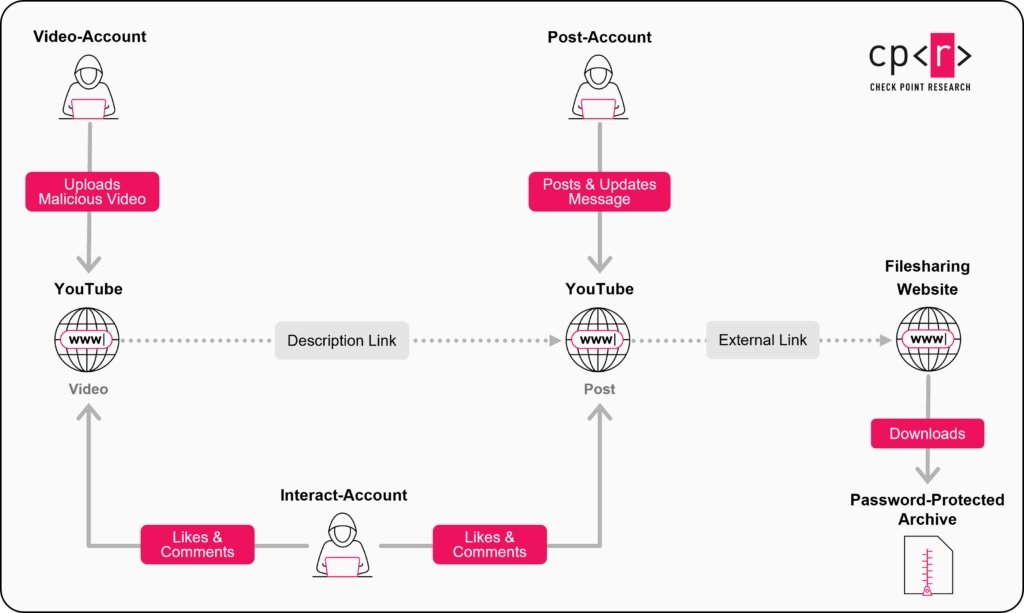

Roles inside the Ghost Network and delivery channels

The network uses a role-based structure for operational resilience, allowing banned accounts to be replaced without breaking the campaign. Common account roles include video-accounts, which upload phishing videos and supply malicious links in descriptions or comments; post-accounts, which publish community messages and posts with links; and interact-accounts, which like videos and post encouraging comments to create credibility. Links often lead to file-hosting services such as MediaFire, Dropbox, and Google Drive, or to phishing pages hosted on platforms like Google Sites, Blogger, or Telegraph, and are sometimes obscured with URL shorteners.
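As a rough illustration of how a defender might triage the links described above, the sketch below pulls URLs out of a video description and labels each by the kind of service hosting it. The host lists, the category names, and the functions `classify_url` and `classify_description` are assumptions made for this example; they are not indicators published by the researchers, and a match says nothing about whether a given link is malicious.

```python
import re
from urllib.parse import urlparse

# Illustrative host lists (assumptions for this sketch, not published indicators).
FILE_HOSTING = {"mediafire.com", "dropbox.com", "drive.google.com"}
PAGE_HOSTING = {"sites.google.com", "blogspot.com", "telegra.ph"}
SHORTENERS = {"bit.ly", "tinyurl.com"}

# Simple pattern for http(s) URLs embedded in free text.
URL_PATTERN = re.compile(r"https?://[^\s<>\"')]+")


def _matches(host, domains):
    """True if host equals one of the domains or is a subdomain of it."""
    return any(host == d or host.endswith("." + d) for d in domains)


def classify_url(url):
    """Label a URL by hosting category, based only on its hostname."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if _matches(host, SHORTENERS):
        return "shortener"
    if _matches(host, FILE_HOSTING):
        return "file-hosting"
    if _matches(host, PAGE_HOSTING):
        return "page-hosting"
    return "other"


def classify_description(text):
    """Return (url, category) pairs for every URL found in the text."""
    return [(url, classify_url(url)) for url in URL_PATTERN.findall(text)]


if __name__ == "__main__":
    sample = "Download here: https://www.mediafire.com/file/abc/setup.zip"
    for url, category in classify_description(sample):
        print(category, url)
```

Because these are legitimate services with heavy benign use, a label like this is best treated as one input for prioritizing review, not as a verdict.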

Malware families observed

Analysts have seen multiple stealer families and loaders distributed via this network, including Lumma Stealer, Rhadamanthys Stealer, StealC Stealer, RedLine Stealer, Phemedrone Stealer, and various Node.js loaders and downloaders. In some cases, high-view videos, with view counts ranging from roughly 147,000 to 293,000, were used to amplify perceived legitimacy.

Examples of compromised channels

For instance, a channel named @Sound_Writer, with 9,690 subscribers, was compromised for over a year and used to upload videos that delivered Rhadamanthys. Another channel, @Afonesio1, with 129,000 subscribers, was compromised in December 2024 and again on January 5, 2025, to host a video advertising a cracked version of Adobe Photoshop, which led to an MSI installer, Hijack Loader, and ultimately Rhadamanthys.

Why Ghost Networks are effective

Ghost Networks scale well because they combine social proof, platform features, and modular account roles, making detection and disruption harder. Even when platforms remove individual accounts or videos, the role-based setup lets operators restore functionality quickly. Threat actors use multiple platform features, including video descriptions, posts, and comments, to persistently promote malicious links and build trust.

Recommendations for users and defenders

For users, avoid downloading software from unofficial sources, treat high-view tutorials with caution, and never follow download links from unknown or suspicious videos. Use reputable antivirus and endpoint protection, and prefer apps from official stores. For platform operators and defenders, it is critical to detect role-based behaviors, track link infrastructure, and rapidly take down abusive accounts and hosting links. Security teams should also warn users about the specific tactics used in these campaigns, including social signal manipulation and file-hosting abuse.
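One simple way to approach tracking link infrastructure is to look for the same external link being promoted from several unrelated channels. The sketch below is a minimal example under stated assumptions: the input is a list of `(channel_id, url)` pairs that a defender has already collected, and `shared_links` and its `min_channels` threshold are hypothetical names chosen for illustration, not part of any tool mentioned in the research.

```python
from collections import defaultdict
from urllib.parse import urlparse


def shared_links(observations, min_channels=2):
    """Group observed links and keep those promoted by several channels.

    observations: iterable of (channel_id, url) pairs (assumed input format).
    Returns a dict mapping a normalized link key to a sorted list of channels.
    """
    channels_by_link = defaultdict(set)
    for channel_id, url in observations:
        parsed = urlparse(url.strip())
        # Normalize: lowercase host, drop scheme, query string, fragment,
        # and any trailing slash so trivially different copies are merged.
        key = f"{(parsed.hostname or '').lower()}{parsed.path.rstrip('/')}"
        channels_by_link[key].add(channel_id)
    return {
        key: sorted(channels)
        for key, channels in channels_by_link.items()
        if len(channels) >= min_channels
    }


if __name__ == "__main__":
    seen = [
        ("chanA", "https://example.com/file/abc"),
        ("chanB", "https://EXAMPLE.com/file/abc/?ref=1"),
        ("chanC", "https://example.org/x"),
    ]
    print(shared_links(seen))
```

Dropping the query string is a deliberate simplification; services that identify a file through a query parameter would need a different normalization, or distinct files would be merged.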