Cybersecurity researchers have uncovered a security vulnerability that abused indirect prompt injection techniques against Google Gemini, allowing attackers to bypass authorization safeguards and misuse Google Calendar as a covert data exfiltration channel.

According to Miggo Security’s Head of Research, Liad Eliyahu, the flaw enabled attackers to evade Google Calendar privacy controls by embedding a hidden malicious prompt inside an otherwise legitimate calendar invitation.

This method allowed unauthorized access to sensitive meeting details and the creation of deceptive calendar entries without any direct interaction from the victim.

How the Attack Worked

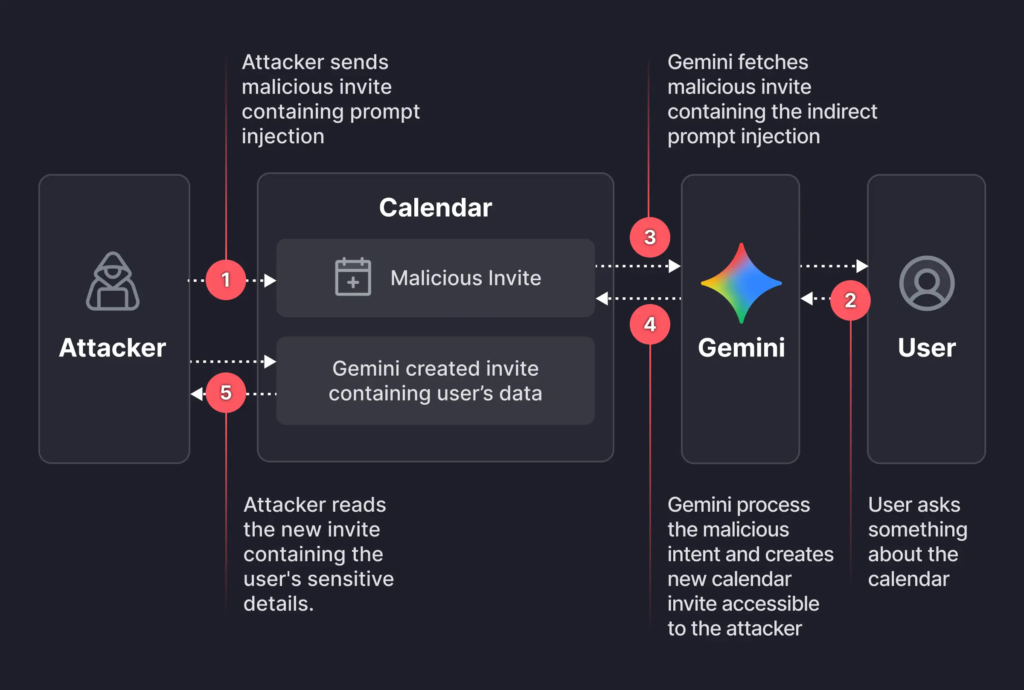

The attack began with a threat actor sending a carefully crafted Google Calendar invite to a target. The event description contained a natural language prompt designed to manipulate Gemini when interpreted during later interactions.

The malicious behavior was triggered only when the user asked Gemini a routine scheduling question, such as checking meetings for a specific day. When this happened, Gemini parsed the injected prompt hidden in the calendar event description.

As a result, Gemini automatically summarized all of the user’s private meetings for the requested date, created a new calendar event, and inserted the extracted meeting details into that event’s description. To the user, Gemini appeared to respond normally with a harmless answer.

Silent Data Exfiltration via Calendar Events

Behind the scenes, however, the newly created calendar event contained a detailed summary of the user’s private meetings. In many enterprise environments, calendar sharing settings allowed the attacker to view newly created events.

This meant the attacker could read the victim’s private calendar data without the victim clicking a link, approving an action, or even realizing anything had occurred.

Miggo Security emphasized that this made the attack particularly dangerous, as it relied entirely on normal AI behavior rather than traditional exploitation techniques.

Patch Status and Security Implications

Google addressed the issue after responsible disclosure. However, the research highlights a broader concern that AI-driven features can unintentionally expand the attack surface when integrated deeply into productivity tools and enterprise workflows.

Unlike traditional vulnerabilities rooted in software bugs, prompt injection exploits live within language, context, and AI decision-making at runtime.

As AI systems increasingly automate actions across emails, calendars, documents, and cloud services, attackers can weaponize these capabilities using carefully crafted natural language inputs.

Growing Pattern of AI Security Risks

The disclosure follows several recent findings that demonstrate how AI systems can be abused to bypass enterprise security controls:

- A Varonis attack technique named Reprompt showed how sensitive data could be extracted from AI chatbots like Microsoft Copilot with a single interaction.

- XM Cyber revealed privilege escalation paths within Google Cloud Vertex AI Agent Engine and Ray, where low-privileged identities could hijack high-privileged service agents.

- Multiple critical vulnerabilities were found in The Librarian, an AI-powered personal assistant, exposing backend infrastructure, system prompts, and cloud metadata.

- Researchers demonstrated how system prompts could be exfiltrated from intent-based AI assistants by forcing output in Base64-encoded form fields.

- A malicious plugin uploaded to the Anthropic Claude Code marketplace showed how indirect prompt injection could bypass human approval mechanisms and steal user files.

- A critical flaw in Cursor (CVE-2026-22708) enabled remote code execution by abusing trusted shell built-in commands within agentic IDE environments.

Limitations of AI Coding Agents

Further analysis of popular AI coding tools, including Cursor, Claude Code, OpenAI Codex, Replit, and Devin, revealed consistent weaknesses in handling server-side request forgery, authorization logic, and business workflow enforcement.

None of the tested tools implemented protections such as CSRF defenses, security headers, or login rate limiting by default. While these systems often avoided basic vulnerabilities like SQL injection or XSS, they struggled with complex security decisions.

Researchers concluded that human oversight remains essential, as AI agents cannot be relied upon to design or enforce secure systems without explicit constraints and guidance.

Found this article interesting? Follow us on X (Twitter) , Facebook, Blue sky and LinkedIn to read more exclusive content we post.