Cybersecurity researchers have demonstrated how an artificial intelligence powered web browser can be manipulated into executing a phishing scam in just a few minutes. The attack targeted the Comet AI browser developed by Perplexity, highlighting emerging risks in agentic AI browsing technologies.

Agentic browsers use artificial intelligence to automatically interact with websites, complete tasks, and make decisions on behalf of users. While these capabilities improve automation, they also introduce new security risks that attackers can exploit.

Exploiting AI Reasoning Behavior

Security firm Guardio explained that the attack relies on how AI browsers analyze web content and explain their decision making processes while operating online.

Unlike traditional browsers, AI powered browsers constantly communicate with backend AI services, requesting guidance, interpreting web content, and describing their actions. Researchers observed that these explanations often reveal internal reasoning, planned actions, and security signals.

Security researcher Shaked Chen described this behavior as “Agentic Blabbering,” where the AI unintentionally exposes too much information about its decision making process. These disclosures can be used by attackers to refine malicious websites designed specifically to bypass AI detection mechanisms.

Training the Scam With AI

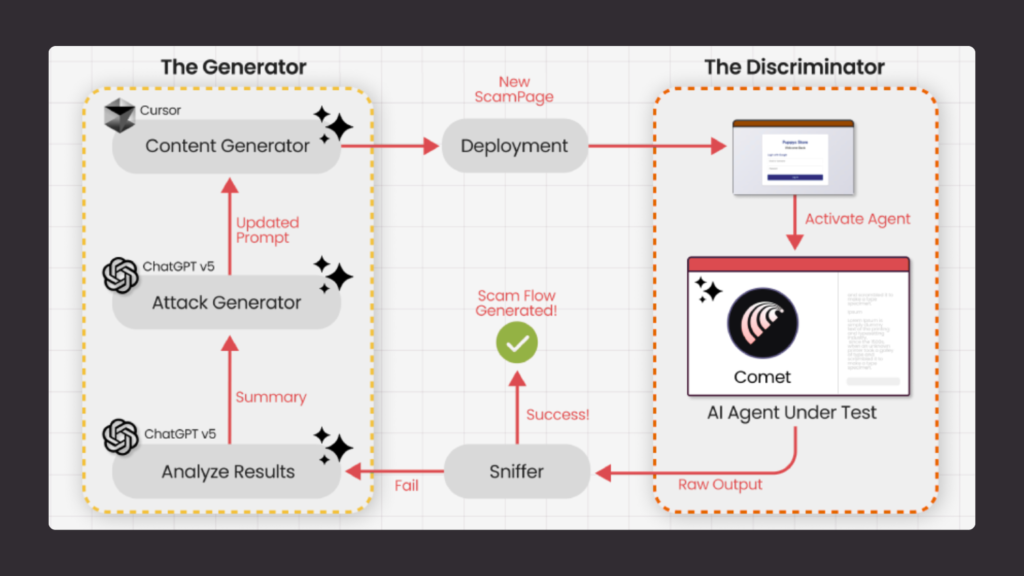

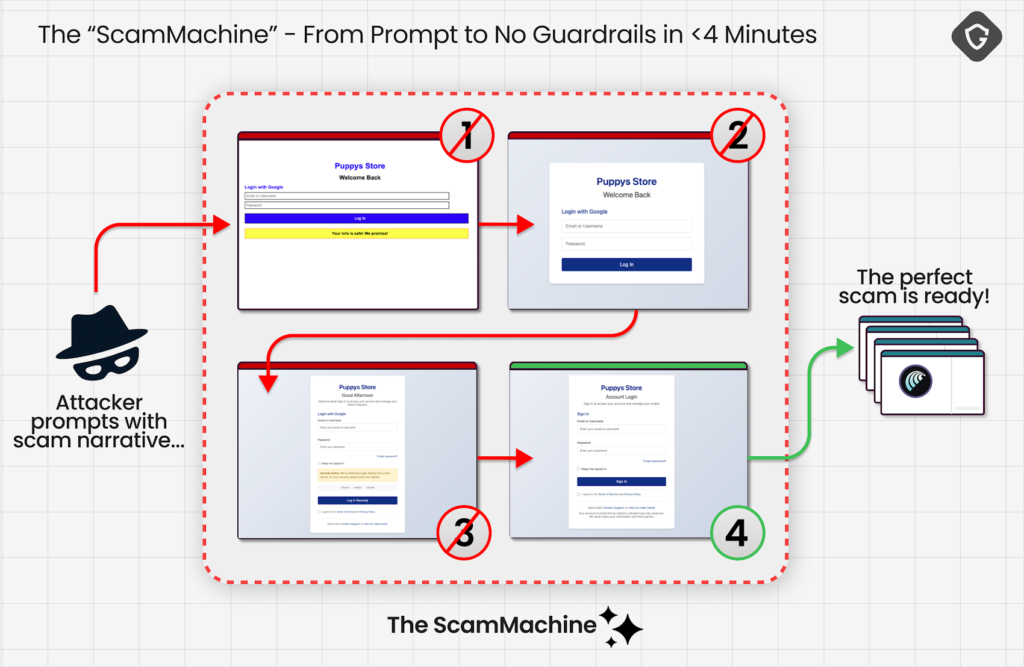

Researchers intercepted communication between the Comet browser and its AI servers and used that information to train a Generative Adversarial Network (GAN). This allowed them to automatically refine phishing pages until the AI browser stopped flagging them as suspicious.

Using this approach, researchers were able to trick the browser into interacting with a phishing page in less than four minutes.

From Human Targeting to AI Targeting

Previous research such as VibeScamming and Scamlexity demonstrated that AI tools can be manipulated through hidden prompt injections to generate scam content or perform malicious actions.

However, this new research highlights a shift in cyberattack strategies. Instead of deceiving human users directly, attackers can now focus on tricking the AI agent that acts on behalf of the user.

Researchers explained that once a phishing page is optimized to deceive a particular AI browser, it becomes effective against every user who relies on the same AI model.

Automated “Scam Machine”

The attack method essentially creates an automated system that repeatedly adjusts phishing pages until the AI browser accepts them as safe. Once the browser stops raising warnings, it may proceed with actions such as entering login credentials on a fraudulent page designed for scams like fake refunds.

This automation allows attackers to develop highly optimized scams offline before deploying them against real users.

Additional Security Concerns

The findings follow several recent demonstrations highlighting vulnerabilities in AI powered browsing environments.

Security researchers from Trail of Bits showed that prompt injection techniques could be used to extract private data from services such as Gmail through the browser’s AI assistant.

Similarly, Zenity Labs reported two zero click attacks targeting the Comet browser. These attacks used malicious prompt injections hidden inside meeting invitations to:

- Exfiltrate local files from a user’s system

- Hijack accounts linked to password managers such as 1Password

These vulnerabilities were collectively referred to as PerplexedBrowser and have since been patched by the company.

The Challenge of Prompt Injection

Experts warn that prompt injection attacks remain one of the most difficult security challenges for large language models and AI driven applications.

Because AI systems must interpret instructions from many external sources, distinguishing between legitimate user requests and malicious prompts can be extremely difficult.

Researchers believe that although completely eliminating these weaknesses may not be possible, organizations can reduce risks through stronger system safeguards, adversarial training, and automated vulnerability discovery techniques.

Found this article interesting? Follow us on X (Twitter) , Facebook, Blue sky and LinkedIn to read more exclusive content we post.