Three serious vulnerabilities have been uncovered in Picklescan, an open-source security tool created by Matthieu Maitre that inspects Python pickle files and flags dangerous behavior before any code is executed. The flaws let attackers hide harmful commands inside PyTorch models and bypass the scanner entirely, posing a major threat to the machine learning supply chain.

Pickle files are widely used to store and load machine learning models, especially in PyTorch. However, because pickle objects can run embedded Python code automatically when loaded, they are considered high risk unless taken from a trusted source. Picklescan attempts to mitigate this danger by analyzing pickle bytecode and comparing it with a list of restricted operations.
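To make the idea of static bytecode inspection concrete, here is a minimal sketch using Python's standard pickletools module. It is not Picklescan's actual implementation: the BLOCKLIST contents, the find_globals helper, and the Demo class are hypothetical names chosen for illustration, and the handling of STACK_GLOBAL is a simple heuristic. The payload is never loaded, only disassembled.

```python
import pickle
import pickletools

# Hypothetical, deliberately tiny blocklist for illustration only.
BLOCKLIST = {("builtins", "eval"), ("builtins", "exec"), ("os", "system")}

# Opcodes that push a text string onto the pickle stack.
STRING_OPS = {"SHORT_BINUNICODE", "BINUNICODE", "BINUNICODE8", "UNICODE"}


def find_globals(data: bytes) -> set:
    """Return the (module, name) pairs a pickle would import, without loading it."""
    found = set()
    recent_strings = []
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in STRING_OPS:
            recent_strings.append(arg)
        elif opcode.name in ("GLOBAL", "INST"):
            # Protocols 0-3 carry "module name" directly in the opcode argument.
            module, name = arg.split(" ", 1)
            found.add((module, name))
        elif opcode.name == "STACK_GLOBAL" and len(recent_strings) >= 2:
            # Protocol 4+ pushes module and name as strings first (heuristic:
            # assume the two most recent strings are the operands).
            found.add((recent_strings[-2], recent_strings[-1]))
    return found


class Demo:
    """Harmless stand-in for a malicious object: unpickling would call eval."""

    def __reduce__(self):
        return (eval, ("1 + 1",))


payload = pickle.dumps(Demo(), protocol=4)
imports = find_globals(payload)
flagged = imports & BLOCKLIST
print("imports:", imports)
print("flagged:", flagged)
```

A scanner built this way is only as good as its blocklist and its opcode handling, which is why gaps in either one translate directly into missed payloads.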

Researchers at JFrog discovered weaknesses that allow malicious models to appear safe during scans while still executing hidden code once loaded. According to security expert David Cohen, these vulnerabilities could enable attackers to distribute dangerous models that trigger silent, large-scale supply chain attacks.

Identified Vulnerabilities

The following flaws were documented:

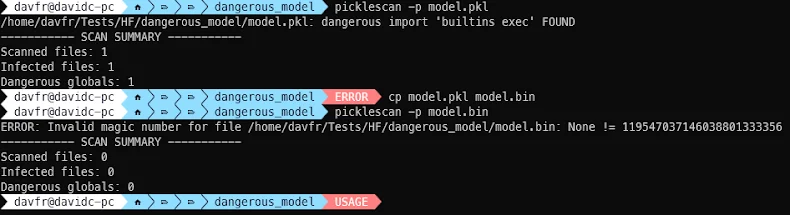

- CVE-2025-10155 (CVSS scores: 9.3, 7.8), a file extension bypass that lets attackers evade the scanner by giving a standard pickle file a PyTorch-related extension such as .bin or .pt.

- CVE-2025-10156 (CVSS scores: 9.3, 7.5), a ZIP archive bypass that introduces a Cyclic Redundancy Check (CRC) error to stop the scanner from inspecting the archive's contents.

- CVE-2025-10157 (CVSS scores: 9.3, 8.3), a weakness that allows attackers to evade the blocklist for unsafe global imports and execute arbitrary code.

If successfully exploited, these issues allow attackers to hide malicious payloads inside PyTorch model files, insert CRC errors into ZIP archives to disrupt scanning, or embed harmful pickle code that Picklescan cannot detect.
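The first two bypasses both come down to a scanner trusting metadata (a file name, or a clean archive read) over content. The sketch below shows one defensive approach under stated assumptions: it classifies a file by its bytes rather than its extension, and it reports ZIP members whose CRC check fails so they can be treated as suspicious instead of being skipped. The helper names classify and zip_members_with_bad_crc are hypothetical, and this is not a description of how Picklescan 0.0.31 fixed the issues.

```python
import io
import pickle
import zipfile


def classify(data: bytes) -> str:
    """Guess the container format from content, ignoring the file name."""
    if zipfile.is_zipfile(io.BytesIO(data)):
        return "zip"
    # Pickle protocols 2-5 start with the PROTO opcode (0x80) and a version
    # byte. Protocols 0 and 1 have no such header, so this check is incomplete.
    if len(data) >= 2 and data[0] == 0x80 and 2 <= data[1] <= 5:
        return "pickle"
    return "unknown"


def zip_members_with_bad_crc(data: bytes) -> list:
    """Return the names of archive members that fail CRC verification."""
    bad = []
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        for info in archive.infolist():
            try:
                archive.read(info)  # zipfile verifies the CRC-32 while reading
            except zipfile.BadZipFile:
                bad.append(info.filename)
    return bad


# A plain pickle that an attacker might save as "model.bin".
renamed = pickle.dumps({"weights": [1, 2, 3]})
print(classify(renamed))

# Build a small archive in memory, then corrupt the stored member's bytes so
# the recorded CRC no longer matches the data.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w", zipfile.ZIP_STORED) as archive:
    archive.writestr("data.pkl", b"hello-world-payload")
corrupted = buffer.getvalue().replace(b"hello", b"jello")
print(classify(corrupted), zip_members_with_bad_crc(corrupted))
```

The design choice worth noting is the failure mode: a member that cannot be verified is reported rather than silently dropped from the scan.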

All three flaws were responsibly disclosed on June 29, 2025, and were fixed in Picklescan version 0.0.31, released on September 9.

Another related vulnerability, CVE-2025-46417, was disclosed by SecDim and DCODX. The flaw allows malicious pickle files to exfiltrate sensitive data over DNS by abusing common Python modules such as linecache and ssl. In the example provided, attackers could read information from files like “/etc/passwd” and send the data to attacker-controlled servers. Picklescan version 0.0.24 failed to detect this issue because the modules involved were not included in its blocklist.
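A blocklist fails open: any module nobody thought to list is allowed. The opposite approach, an allowlist enforced at load time, fails closed. The sketch below follows the "restricting globals" pattern from the Python pickle documentation by overriding Unpickler.find_class. The ALLOWED set, the AllowlistUnpickler class, and the restricted_loads helper are hypothetical names for illustration, and this is a complementary defense rather than anything Picklescan itself does.

```python
import collections
import io
import linecache
import pickle

# Hypothetical allowlist; a real one would be tailored to the model format.
ALLOWED = {
    ("builtins", "list"),
    ("builtins", "dict"),
    ("collections", "OrderedDict"),
}


class AllowlistUnpickler(pickle.Unpickler):
    """Refuse to import any global that is not explicitly allowed."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is not allowed")


def restricted_loads(data: bytes):
    """Load a pickle, rejecting it as soon as it requests a disallowed global."""
    return AllowlistUnpickler(io.BytesIO(data)).load()


class ReadsFile:
    """Stand-in for a payload that references linecache; never executed here."""

    def __reduce__(self):
        return (linecache.getline, ("nonexistent.txt", 1))


safe = restricted_loads(pickle.dumps(collections.OrderedDict(a=1)))
print(safe)

try:
    restricted_loads(pickle.dumps(ReadsFile()))
    rejected = False
except pickle.UnpicklingError as error:
    rejected = True
    print("rejected:", error)
```

Because the check happens when the global is resolved, the disallowed callable is never imported or invoked, regardless of whether a scanner recognized the module beforehand.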

These incidents highlight long-standing problems in the security ecosystem. Machine learning libraries like PyTorch evolve quickly, while security tools struggle to keep up with new model formats, features, and execution paths. This creates a widening protection gap that adversaries can exploit.

Researchers stress that organizations should not rely on a single defense layer. Instead, they recommend using a research-driven security proxy that continuously analyzes new ML models, monitors library changes, and detects novel exploitation methods to strengthen defenses across the AI supply chain.
