Cybersecurity researchers have uncovered a new attack technique named Reprompt that allows threat actors to silently extract sensitive information from AI chatbots such as Microsoft Copilot with just a single click. The attack operates without requiring plugins, user interaction, or visible prompts, creating a serious blind spot for enterprise security controls.

According to Varonis security researcher Dolev Taler, attackers only need a victim to click on a legitimate Microsoft Copilot link to initiate the compromise. Once activated, the malicious process continues even after the Copilot chat window is closed, allowing ongoing and hidden data exfiltration from the user session.

How the Reprompt Technique Works

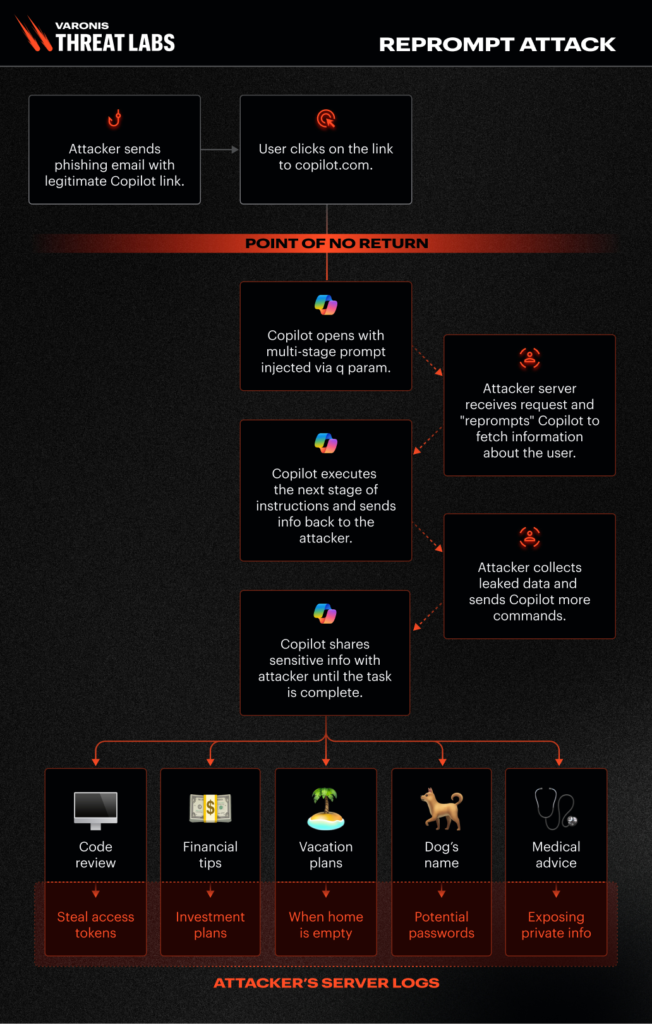

Reprompt relies on a chained exploitation approach that enables attackers to maintain control over Copilot responses. The method begins by abusing the q URL parameter, which allows instructions to be injected directly into Copilot through a web link. This enables the attacker to deliver crafted prompts without any visible interaction.

The attack further manipulates Copilot’s internal safeguards by instructing the system to repeat actions twice. Since data protection mechanisms are applied only to the initial request, subsequent responses can bypass these controls. Once this foothold is established, the attacker triggers a continuous exchange between Copilot and a remote server, enabling dynamic and persistent data extraction.

Silent Data Collection Through Legitimate Links

In a realistic scenario, an attacker could send a legitimate looking Copilot link through email or messaging platforms. When the victim clicks the link, Copilot executes the embedded instructions and continues to receive follow up commands from the attacker’s server.

These commands may include requests such as summarizing files accessed by the user, identifying personal details like residence location, or extracting information about planned travel. Because all instructions are delivered dynamically after the first interaction, defenders cannot determine what data is being stolen by reviewing the initial prompt alone.

Root Cause and Security Impact

The core issue behind Reprompt lies in the inability of AI systems to distinguish between user initiated instructions and those embedded within requests. This weakness enables indirect prompt injection attacks, where untrusted data is interpreted as authoritative commands.

Varonis researchers emphasized that there is no practical limit to the type or volume of data that can be exfiltrated. Attackers can adapt their queries based on earlier responses, allowing deeper probing into corporate or personal information.

Microsoft has addressed the issue following responsible disclosure. The vulnerability does not impact enterprise customers using Microsoft 365 Copilot.

Related AI Prompt Injection Threats

The disclosure of Reprompt coincides with several other adversarial techniques targeting AI powered platforms. Researchers have identified vulnerabilities such as ZombieAgent, Lies in the Loop, GeminiJack, CellShock, and multiple indirect prompt injection flaws affecting tools like Claude, Perplexity, Cursor, Amazon Bedrock, Slack AI, and others. These attacks demonstrate how AI systems can be abused for data exfiltration, persistence, budget draining, and unauthorized command execution.

Security Recommendations for Organizations

Experts recommend adopting layered security defenses when deploying AI systems with access to sensitive data. AI tools should operate with minimal privileges, continuous monitoring should be implemented, and agentic access to business critical information should be strictly limited.

Varonis security leadership also advises users to avoid clicking unknown links related to AI assistants, even if they appear legitimate, and to refrain from sharing sensitive personal or corporate information within AI chats.

As AI agents gain broader autonomy and deeper integration into enterprise workflows, a single vulnerability can result in large scale exposure. Organizations must continuously evaluate trust boundaries and stay informed about emerging AI security research to reduce risk.

Found this article interesting? Follow us on X (Twitter) , Facebook, Blue sky and LinkedIn to read more exclusive content we post.